Lecture 18

Designing Experiments

ABD 3e Chapter 14

Learning Objectives

- Distinguish between association and causation and explain why observational studies cannot establish causation

- Define confounding and explain how it biases inference

- Describe how controls, random assignment, and blinding reduce bias in experiments

- Identify experimental units and distinguish true replication from pseudoreplication

- Explain how replication, balance, and blocking reduce sampling error and improve precision

- Interpret factorial designs and explain how interactions between factors arise

- Describe how matching and adjustment reduce confounding in observational studies

- Explain how sample size is chosen to achieve desired precision or statistical power

Experiments

Observations Are Not Enough

- Observational studies

- Reveal patterns in real-world data

- Researchers observe and measure variables as they naturally occur

- But patterns alone do not tell us why they occur

- Association: two variables change together

- Causation: a change in one variable directly produces a change in another

- Multiple explanations can produce the same association

- Association does not imply causation

Why Causal Inference Is Hard

- Confounding: a third variable influences both variables

- Directionality: cause and effect can be reversed

- These problems cannot be resolved with more data alone

- We need a way to isolate the effect of a single factor → experiments

Example: When Observational Studies Mislead

Observational studies suggested that hormone replacement therapy (HRT) reduced heart disease risk in women (Stampfer et al. 1991, New England Journal of Medicine)

Women taking HRT had lower rates of heart disease

Conclusion (at the time): HRT protects against heart disease

What Was the Problem?

- Women who chose HRT differed in important ways:

- Higher socioeconomic status

- Better access to healthcare

- Healthier lifestyles overall

- These differences (confounders) also reduce heart disease risk

What Happened in an Experiment?

A randomized trial (Women’s Health Initiative) assigned HRT randomly

Result: HRT did not reduce heart disease risk (and increased some risks)

The original association was due to confounding, not causation

What Is an Experiment?

- A study where researchers actively impose conditions on a system

- Researchers assign different conditions (treatments) to experimental units

- Outcomes are then compared across those conditions

- This allows us to isolate the effect of a specific factor

Why Experiments Work

- Experiments are designed to support causal inference

- By controlling how conditions are assigned, we reduce alternative explanations

- Properly designed experiments break the link between confounders and treatment

- Differences in outcomes can be attributed to the treatment

Key Idea

- Observational studies measure what already exists

- Experiments create conditions for comparison

- This is what allows us to move from association → causation

Eliminating Bias

Be Wary of Bias in Your Design

- Biased experiments produce biased conclusions

- They tell you about your design, not the real world

- Bias must be addressed before data are collected

- It cannot be fixed afterward

- Large \(n\) does not solve bias

- It can make biased results more convincing

Design Features That Reduce Bias

- Three core strategies:

- Controls

- Provide a baseline for comparison

- Random assignment

- Breaks the link between confounders and treatment

- Blinding

- Prevents subjects and researchers from influencing outcomes

Eliminating Bias: Strategy 1 — Use Controls

- A control group provides a baseline for comparison

- Control units are treated as similarly as possible to treatment units

- Except for the treatment itself

- This allows us to isolate the effect of the treatment

- Without a control, we cannot determine cause and effect

What Makes a Good Control?

- A good control matches the treatment group in all relevant ways

- The only systematic difference should be the treatment

- Poor controls introduce new differences (new confounding)

- Doing nothing is not always an appropriate control

Control Example: Placebo

Outcomes can change simply because a treatment is given

A placebo mimics the treatment without an active ingredient

Good placebo: indistinguishable from the treatment

Bad placebo: differs in noticeable ways (e.g., taste, side effects)

Why Controls? Example: Independent Recovery

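People often seek treatment when their symptoms are at their worst.

Symptoms often improve on their own from that low point, so many people are already on their way to recovery when they see their doctor, whether or not the treatment works.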

To measure the effects of a new therapy, we need a comparable control group.

Eliminating Bias: Strategy 2 — Random Assignment

- Random assignment: assign treatments to units by chance

- This breaks the link between confounders and treatment

- Known and unknown differences are balanced on average across groups

- Prevents systematic differences between groups

Why Random Assignment Matters

- Without randomization, group differences can reflect confounding

- Non-random assignment can create bias

- Example: assign treatment by last name

- May group family members or cultural backgrounds together

- Creates systematic differences between groups

- Randomization prevents these patterns

How to randomly assign

- Identify the experimental units

- Assign treatments using a random process

- Goal: each unit has an equal chance of receiving each treatment

- In practice:

- Use a random number generator

- Use software (e.g., R)

Steps for randomly assigning 10 units in R:

1. Create a tibble (data frame) with one variable, `id`, with values 1 through 10
2. Modify the variables in the tibble
3. Add a new `treatment` variable using the `sample` function, which randomly draws values
4. The values should consist of either `"control"` or `"treatment"`
5. `n()` ensures the number of values in the sample matches the number of rows in the tibble
6. `replace=TRUE` draws labels with replacement, so each unit is assigned independently at random (without it, `sample` cannot draw 10 values from only two labels); group sizes can therefore be unequal
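The course does these steps in R. The same two ideas can be sketched in Python with only the standard library. This is an illustrative translation, not the course code, and the function names `simple_randomize` and `balanced_randomize` are hypothetical. The first function mirrors drawing with replacement: each unit is assigned independently, so group sizes can differ. The second function shuffles an equal number of labels, which guarantees a balanced design while still giving every unit an equal chance of each treatment.

```python
import random


def simple_randomize(unit_ids, treatments=("control", "treatment"), seed=None):
    """Assign each unit independently at random (same idea as sampling with replacement).

    Every unit has an equal chance of each treatment, but group sizes can differ.
    """
    rng = random.Random(seed)
    return {uid: rng.choice(treatments) for uid in unit_ids}


def balanced_randomize(unit_ids, treatments=("control", "treatment"), seed=None):
    """Randomly assign treatments so group sizes are as equal as possible.

    Builds a list with equal counts of each label, then shuffles it.
    """
    rng = random.Random(seed)
    ids = list(unit_ids)
    labels = [treatments[i % len(treatments)] for i in range(len(ids))]
    rng.shuffle(labels)
    return dict(zip(ids, labels))


ids = range(1, 11)  # ten experimental units with id = 1..10
print(simple_randomize(ids, seed=1))
print(balanced_randomize(ids, seed=1))
```

Fixing the `seed` makes an assignment reproducible, so it can be recorded before the experiment starts.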

Results of random assignment

- Randomization does not eliminate confounders

- It removes systematic bias by breaking their association with treatment

- Remaining differences are due to chance (sampling error)

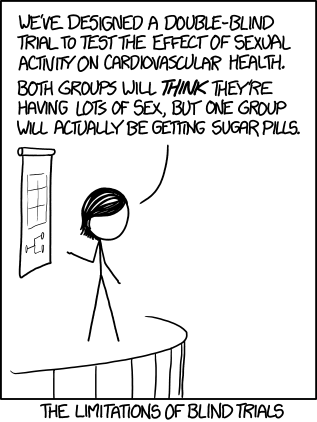
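A small simulation can show what "balanced on average" means. This is a minimal Python sketch. The 20 ages are hypothetical values drawn at random and stand in for a confounder. Across many random assignments, the treated-minus-control difference in mean age centers on zero, and any single assignment still shows some chance imbalance. Assigning the treatment to the 10 oldest units instead creates a large systematic difference.

```python
import random
import statistics


def mean_age_difference(ages, treated_idx):
    """Mean age of treated units minus mean age of control units."""
    treated = [ages[i] for i in treated_idx]
    control = [ages[i] for i in range(len(ages)) if i not in treated_idx]
    return statistics.mean(treated) - statistics.mean(control)


def randomization_differences(ages, n_treated, n_reps=5000, seed=0):
    """Differences in mean age between groups across many random assignments."""
    rng = random.Random(seed)
    idx = range(len(ages))
    diffs = []
    for _ in range(n_reps):
        treated = set(rng.sample(idx, n_treated))  # a fresh random assignment
        diffs.append(mean_age_difference(ages, treated))
    return diffs


rng = random.Random(42)
ages = [rng.randint(20, 70) for _ in range(20)]  # hypothetical confounder values

diffs = randomization_differences(ages, n_treated=10)
print(f"average difference over randomizations: {statistics.mean(diffs):.2f}")
print(f"SD of difference (chance imbalance):    {statistics.stdev(diffs):.2f}")

# Non-random assignment: the 10 oldest units get the treatment.
oldest = set(sorted(range(20), key=lambda i: ages[i])[-10:])
print(f"difference if oldest are treated:       {mean_age_difference(ages, oldest):.2f}")
```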

Eliminating Bias: Strategy 3 — Blinding

- Blinding: keeping participants and/or researchers unaware of treatment assignment

- Prevents expectations from influencing outcomes

- Participants may respond differently if they know their treatment

- Researchers may treat or measure subjects differently

- Reduces bias introduced during data collection

Types of Blinding

- Single-blind: participants do not know their treatment

- Prevents subject expectations from influencing outcomes

- Double-blind: neither participants nor researchers know

- Prevents both subject and researcher bias

- Stronger blinding → less opportunity for bias

Why Blinding Matters

- Knowledge of treatment can influence:

- Behavior

- Reporting of symptoms

- Measurement of outcomes

- Unblinded studies often show larger effects

- These may reflect bias, not true treatment effects

Blinding in Practice

Sampling Error

Reducing sampling error improves precision and power

- Even unbiased experiments have variability among individuals (“noise”)

- This variability creates sampling error in estimates

- Sampling error reduces:

- Precision of estimates

- Power to detect treatment effects

Holding conditions constant reduces noise but limits generality

- Reduce noise by keeping conditions constant:

- Environment (e.g., temperature, humidity)

- Participant characteristics (e.g., age, sex, genotype)

- Tradeoff:

- More control → less variability

- But results may not generalize broadly

Overly narrow conditions can create bias in applicability

- Restricting study populations limits who results apply to

- Example:

- Many clinical trials historically included only men

- Results were applied broadly, including to women

- Design decisions affect external validity

Key design strategies reduce sampling error

Key design strategies:

Replication

Balance

Blocking

Using extreme treatments

Goal: reduce noise without sacrificing generality

Replication is essential to separate signal from noise

- Replication: applying each treatment to multiple experimental units

- Without replication:

- Cannot distinguish treatment effects from random variation

- More replication:

- More information

- Better estimates

- Higher power to detect real effects

Replication depends on independent experimental units

- Replicates must be independent units

- Experimental unit:

- The unit assigned a treatment independently

- Examples:

- Individual organism (if assigned independently)

- Group units: plot, cage, household, petri dish

- Key rule:

- Individuals within the same unit are not independent

Replication is not just “more individuals”

- Multiple organisms ≠ multiple replicates

- If organisms share the same environment:

- They are more similar to each other

- They count as one replicate

- Must identify the correct experimental unit:

- Critical for design and analysis

Example: Which designs are truly replicated?

- Two growth chambers:

- Control vs Treatment (different light)

- Multiple plants per chamber

- Share the same environment

- Experimental unit = chamber, not plant

- One chamber per treatment → no replication

- Lighting differs between chambers

- Cannot separate treatment from chamber effect

Interspersion signals proper replication

- Proper replication shows interspersion:

- Treatments mixed across units

- Result of random assignment

- Lack of interspersion:

- Warning sign of design problems

- Likely non-independence

Pseudoreplication leads to false precision

- Treating non-independent units as independent = pseudoreplication

- Examples:

- Treating plants within a chamber as separate replicates

- Repeated measurements on same individual

- Consequences:

- Standard errors too small

- Overconfidence in results
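The growth-chamber example can be simulated to show false precision. This is a minimal Python sketch with hypothetical settings: there is one chamber per treatment and 10 plants per chamber, with a chamber-level SD of 1, a plant-level SD of 1, and no true treatment effect. The naive analysis treats every plant as an independent replicate. It declares a "significant" difference far more often than the nominal 5%, because its standard error ignores the variation between chambers.

```python
import random
import statistics


def naive_t(group1, group2):
    """Two-sample t statistic that (wrongly) treats every plant as an independent replicate."""
    n1, n2 = len(group1), len(group2)
    sp2 = ((n1 - 1) * statistics.variance(group1)
           + (n2 - 1) * statistics.variance(group2)) / (n1 + n2 - 2)
    se = (sp2 * (1 / n1 + 1 / n2)) ** 0.5
    return (statistics.mean(group1) - statistics.mean(group2)) / se


def simulate_chamber_experiment(rng, plants_per_chamber=10, chamber_sd=1.0, plant_sd=1.0):
    """One chamber per treatment and NO true treatment effect (hypothetical settings).

    All plants in a chamber share that chamber's random effect, so they are not independent.
    """
    groups = []
    for _ in range(2):  # one chamber for control, one for treatment
        chamber_effect = rng.gauss(0, chamber_sd)
        groups.append([chamber_effect + rng.gauss(0, plant_sd)
                       for _ in range(plants_per_chamber)])
    return groups


def false_positive_rate(n_sims=4000, t_crit=2.101, seed=1):
    """Fraction of simulated experiments where the naive test declares an effect.

    t_crit = 2.101 is the two-sided 5% critical value of t with 18 df (10 + 10 - 2).
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        g1, g2 = simulate_chamber_experiment(rng)
        if abs(naive_t(g1, g2)) > t_crit:
            hits += 1
    return hits / n_sims


print(f"naive false-positive rate: {false_positive_rate():.2f} (nominal 0.05)")
```

With only one chamber per treatment, no analysis can validly separate the treatment from the chamber. The remedy is to use several chambers per treatment and to treat the chamber as the replicate.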

Why replication reduces standard error

\[ \operatorname{SE}_{\bar{Y}_1 - \bar{Y}_2} = \sqrt{s_p^2 \left(\frac{1}{n_1} + \frac{1}{n_2}\right)} \]

- \(n_1\), \(n_2\) = number of independent replicates per treatment

- Increasing sample size ↓ standard error

- Lower standard error → clearer detection of differences

Replication has practical limits

- Increasing sample size improves inference

- But comes with costs:

- Time

- Money

- Ethical considerations (e.g., animal use)

- Goal:

- Sufficient replication to detect meaningful effects

Balanced designs minimize sampling error

Balanced design = equal sample size in each treatment

Unbalanced design = unequal sample sizes

For a fixed total sample size:

- Standard error is smallest when group sizes are equal

- Balance optimizes precision of comparisons

Why balance improves precision

\[ \operatorname{SE}_{\bar{Y}_1 - \bar{Y}_2} = \sqrt{s_p^2 \left(\frac{1}{n_1} + \frac{1}{n_2}\right)} \]

- For fixed \(n_1 + n_2\):

- SE minimized when \(n_1 = n_2\)

- Example (total \(n = 20\)):

- Balanced: \(n_1=10\), \(n_2=10\) → smaller SE

- Unbalanced: \(n_1=19\), \(n_2=1\) → much larger SE

- Estimating a difference requires:

- Precise estimate of both means

- Unbalanced design:

- One group well estimated

- Other poorly estimated → weak comparison

- Balance allocates effort efficiently
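The SE formula can be evaluated for every way of splitting a fixed total of 20 units. This is a minimal Python sketch. It assumes \(s_p = 1\), a hypothetical value that does not affect the comparison because every SE scales in proportion to \(s_p\). The search over all splits finds the smallest SE at \(n_1 = n_2 = 10\).

```python
import math


def se_difference(n1, n2, sp=1.0):
    """SE of Ybar1 - Ybar2 = sqrt(sp^2 * (1/n1 + 1/n2)); sp = 1 is a hypothetical value."""
    return math.sqrt(sp**2 * (1 / n1 + 1 / n2))


total = 20
for n1 in (10, 15, 19):
    n2 = total - n1
    print(f"n1={n1:2d}, n2={n2:2d}: SE = {se_difference(n1, n2):.3f}")

# Search every split of the fixed total for the smallest SE.
best = min(range(1, total), key=lambda n1: se_difference(n1, total - n1))
print("smallest SE at n1 =", best)
```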

Balance is optimal but not strictly required

- Increasing sample size improves precision:

- Even if added to only one group

- But for a fixed total sample size:

- Equal allocation is optimal

- Additional benefit:

- Statistical methods are more robust

- Especially when variances differ between groups

Blocking reduces noise from known sources of variation

Blocking: group similar experimental units into blocks

Units within a block:

- Share location or other characteristics

- Are more similar to each other than to units in other blocks

Goal:

- Remove variation not caused by the treatment

- Increase precision and power

How blocking works

Within each block:

- Assign treatments randomly

- Treatments are interspersed within the block

Analyze differences within blocks, not across all units

Conceptually:

- Repeat the same experiment in each block

- Compare treatments under similar conditions
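Randomizing within blocks amounts to running a separate random assignment in each block. This is a minimal Python sketch. The three-block field, the plot IDs, and the function name `randomize_within_blocks` are all hypothetical. When a block's size is a multiple of the number of treatments, every block receives each treatment equally often, so the treatments are interspersed within every block.

```python
import random


def randomize_within_blocks(blocks, treatments=("control", "treatment"), seed=None):
    """Randomly assign treatments separately within each block.

    blocks: dict mapping block name -> list of unit ids.
    Returns a dict mapping unit id -> (block, treatment).
    """
    rng = random.Random(seed)
    assignment = {}
    for block, units in blocks.items():
        # Equal counts of each treatment within the block (if the size allows) ...
        labels = [treatments[i % len(treatments)] for i in range(len(units))]
        rng.shuffle(labels)  # ... in a random order, independently in every block
        for unit, label in zip(units, labels):
            assignment[unit] = (block, label)
    return assignment


# Hypothetical field: 3 blocks (areas of the field) with 4 plots each.
field = {
    "north": ["N1", "N2", "N3", "N4"],
    "middle": ["M1", "M2", "M3", "M4"],
    "south": ["S1", "S2", "S3", "S4"],
}
for plot, (block, trt) in randomize_within_blocks(field, seed=7).items():
    print(block, plot, trt)
```

The analysis then compares treatments within each block. This removes block-to-block differences from the comparison.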

When is blocking useful?

- Use blocking when:

- Units differ due to known factors (e.g., location, time, group)

- Examples of blocks:

- Field plots in the same area

- Animals from the same litter

- Patients from the same clinic

- Experiments run on the same day

- Key condition:

- Units within blocks are similar

- Blocks differ from each other

Example: Extreme treatments reveal nitrogen effects

Clark and Tilman (2008) studied whether nitrogen addition reduces plant diversity

Typical (background) N deposition: ~1–10 kg N ha⁻¹ yr⁻¹

Experimental treatments: Up to 100 kg N ha⁻¹ yr⁻¹ (extreme)

Why use extreme levels?

- Amplify the response

- Make treatment effects easier to detect

Result: Clear decline in species richness with higher N

Extreme treatments make effects easier to detect

Treatment effects are easiest to detect when they are large

Small differences:

- Hard to distinguish from random variation

- Require larger sample sizes

Large differences:

- Stand out against noise

- Increase power to detect an effect

Strategy:

- Include extreme treatment levels
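The link between effect size and detectability can be made concrete with an approximate power calculation. This is a minimal Python sketch. It uses a normal approximation rather than the t distribution, which slightly overstates power at small \(n\). The noise (\(\sigma = 1\)) and the sample size (10 per group) are hypothetical and held fixed, so only the size of the treatment difference changes.

```python
from statistics import NormalDist


def approx_power(delta, sigma, n_per_group, alpha=0.05):
    """Approximate power of a two-sided comparison of two means.

    Normal approximation: power ≈ Φ(|δ| / SE − z_{1−α/2}), with SE = sqrt(2σ²/n).
    Ignores the tiny chance of rejecting in the wrong direction.
    """
    z = NormalDist()
    se = (2 * sigma**2 / n_per_group) ** 0.5
    z_crit = z.inv_cdf(1 - alpha / 2)
    return z.cdf(abs(delta) / se - z_crit)


# Same noise (sigma = 1) and same n (10 per group); only the treatment difference changes.
for delta in (0.25, 0.5, 1.0, 2.0):
    print(f"difference = {delta:4}: power ≈ {approx_power(delta, sigma=1, n_per_group=10):.2f}")
```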

Extreme treatments increase power, but with tradeoffs

- Stronger treatments → larger response differences

- Higher probability of detecting an effect

- Useful as a first step:

- Does this variable affect the response at all?

- Caution:

- Effects may not scale linearly

- Extreme treatments may not reflect realistic conditions

- Balance:

- Detection vs realism

Experiments with More Than One Factor

Experiments can include more than one factor

- A factor:

- A single treatment variable of interest

- Many experiments include multiple factors

- More efficient:

- Answer multiple questions at once

- Use time, materials, and effort more effectively

- Example idea:

- Temperature + nutrients

- Light + water

Factorial designs test combinations of factors

- Factorial design:

- Includes all combinations of treatment levels

- Example structure (2 factors):

- Factor A: A₁, A₂

- Factor B: B₁, B₂

- Treatments:

- A₁B₁, A₁B₂, A₂B₁, A₂B₂

- Key advantage:

- Can test interactions between factors

Factorial design: two factors with two levels each (rows: Variable A; columns: Variable B)

| | B₁ | B₂ |
|---|---|---|
| A₁ | A₁B₁ | A₁B₂ |
| A₂ | A₂B₁ | A₂B₂ |

Interactions: when effects depend on each other

- Interaction: Effect of one factor depends on another factor

- Without interaction:

- Effects are independent and additive

- With interaction:

- Combined effect differs from separate effects

- Only detectable with factorial design

- Examples of types of interactions (Duda et al. 2023)
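In a 2 × 2 factorial design, the interaction can be computed from the four cell means. It is the amount by which the effect of A changes between the two levels of B. This is a minimal Python sketch, and both sets of cell means are hypothetical. In the additive case, A raises the response by the same amount at both levels of B, so the interaction is zero. In the interacting case, the effect of A depends on B.

```python
def factorial_effects(means):
    """Main effects and interaction from the four cell means of a 2 x 2 factorial design.

    means: dict keyed by (a_level, b_level) with levels 1 or 2,
    e.g. means[(1, 2)] is the mean of treatment A1B2.
    """
    effect_a_at_b1 = means[(2, 1)] - means[(1, 1)]  # effect of A when B is at level 1
    effect_a_at_b2 = means[(2, 2)] - means[(1, 2)]  # effect of A when B is at level 2
    effect_b_at_a1 = means[(1, 2)] - means[(1, 1)]
    effect_b_at_a2 = means[(2, 2)] - means[(2, 1)]
    return {
        "main_A": (effect_a_at_b1 + effect_a_at_b2) / 2,
        "main_B": (effect_b_at_a1 + effect_b_at_a2) / 2,
        # How much the effect of A changes across levels of B; zero means additive effects.
        "interaction": effect_a_at_b2 - effect_a_at_b1,
    }


# Hypothetical cell means
additive = {(1, 1): 10, (2, 1): 14, (1, 2): 12, (2, 2): 16}     # A adds 4 under B1 and B2
interacting = {(1, 1): 10, (2, 1): 14, (1, 2): 12, (2, 2): 24}  # A adds 4 under B1, 12 under B2
print(factorial_effects(additive))
print(factorial_effects(interacting))
```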

Example: 4-factor factorial experiment (smoking reduction)

Source: Cook et al. (2015), Addiction

Outcome: % reduction in cigarettes/day

4 factors (2 levels each: yes vs no):

- Nicotine patch

- Nicotine gum

- Motivational interviewing

- Behavioral reduction

Design: 2 × 2 × 2 × 2 = 16 combinations

Key idea: Effects depend on combinations of treatments (interactions)
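A full factorial design contains every combination of factor levels, and those combinations can be generated mechanically. This is a minimal Python sketch using `itertools.product`. The four factors and their yes/no levels come from the study described above. The snake_case identifiers are hypothetical labels.

```python
from itertools import product

# The four factors from the smoking-reduction example, each with two levels.
factors = {
    "nicotine_patch": ("no", "yes"),
    "nicotine_gum": ("no", "yes"),
    "motivational_interviewing": ("no", "yes"),
    "behavioral_reduction": ("no", "yes"),
}

# A full factorial design includes every combination of levels: 2 x 2 x 2 x 2.
combinations = [dict(zip(factors, levels)) for levels in product(*factors.values())]

print(len(combinations), "treatment combinations")
for combo in combinations[:3]:
    print(combo)
```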

What if You Can’t Do an Experiment?

When experiments are not possible

- Use observational studies

- Researcher does not assign treatments

- Subjects come as they are

- Strengths:

- Detect real-world patterns

- Generate hypotheses

- Limitation:

- Cannot use randomization

- → greater risk of bias

Observational studies still use good design principles

- Apply as many experimental design features as possible:

- Controls

- Blinding (when possible)

- Replication, balance, blocking

- Key missing feature:

- Randomization

- Biggest challenge:

- Confounding variables

Strategy 1: Matching

- Matching: Pair each treated individual with a similar control

- Match on known confounders:

- Age, sex, weight, background, etc.

- Common in: Case–control studies

- Benefits:

- Reduces bias

- Reduces sampling error (like blocking)

- Limitation:

- Only controls known confounders
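One simple way to match is greedy 1:1 nearest-neighbor matching on a single known confounder. This is a minimal Python sketch, and it is only one of several matching schemes. The ages and IDs are hypothetical. The sketch matches without replacement and assumes there are at least as many controls as treated individuals.

```python
def match_on_confounder(treated, controls):
    """Greedy 1:1 nearest-neighbor matching on one confounder, without replacement.

    treated, controls: dicts mapping individual id -> confounder value (e.g., age).
    Returns a list of (treated_id, control_id) pairs.
    Assumes at least as many controls as treated individuals.
    """
    available = dict(controls)
    pairs = []
    for t_id, t_value in sorted(treated.items(), key=lambda kv: kv[1]):
        # Pick the still-unmatched control whose value is closest to this treated individual.
        c_id = min(available, key=lambda c: abs(available[c] - t_value))
        pairs.append((t_id, c_id))
        del available[c_id]  # each control is used at most once
    return pairs


# Hypothetical ages
treated_ages = {"T1": 34, "T2": 51, "T3": 67}
control_ages = {"C1": 22, "C2": 36, "C3": 49, "C4": 58, "C5": 70}
for t, c in match_on_confounder(treated_ages, control_ages):
    print(t, treated_ages[t], "<->", c, control_ages[c])
```

Matching only balances the confounders it uses. Unmeasured differences between the matched pairs remain.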

Strategy 2: Adjustment

- Adjustment: Use statistical methods to control for confounders

- Example approach:

- Compare groups at the same value of a confounder (e.g., age)

- Methods:

- Regression

- Analysis of covariance (ANCOVA)

- Key requirement:

- Groups must overlap in confounder values
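The idea of comparing groups at the same value of a confounder can be illustrated with stratification. Stratification is a simpler form of adjustment than regression or ANCOVA, and it is not the same method. This is a minimal Python sketch on hypothetical data. Here the treatment has no effect within either age group, but treated individuals are mostly young and young individuals have higher outcomes. The crude difference is therefore misleading, and the age-adjusted difference is zero. Strata that lack either group are skipped because they provide no overlap. Weighting strata by their size is one choice among several.

```python
import statistics


def crude_difference(records):
    """Unadjusted treated-minus-control difference in mean outcome."""
    treated = [y for _, g, y in records if g == "treated"]
    control = [y for _, g, y in records if g == "control"]
    return statistics.mean(treated) - statistics.mean(control)


def stratified_difference(records):
    """Treated-minus-control difference adjusted for a categorical confounder.

    records: list of (stratum, group, outcome) with group "treated" or "control".
    Computes the difference within each stratum, then averages the stratum
    differences weighted by stratum size.
    """
    strata = {}
    for stratum, group, y in records:
        strata.setdefault(stratum, {"treated": [], "control": []})[group].append(y)
    total_weight, weighted_sum = 0, 0.0
    for groups in strata.values():
        if groups["treated"] and groups["control"]:  # need overlap to compare
            diff = statistics.mean(groups["treated"]) - statistics.mean(groups["control"])
            weight = len(groups["treated"]) + len(groups["control"])
            weighted_sum += weight * diff
            total_weight += weight
    if total_weight == 0:
        raise ValueError("no stratum contains both treated and control individuals")
    return weighted_sum / total_weight


# Hypothetical data: outcome depends on age group, not on treatment.
data = (
    [("young", "treated", y) for y in (9, 10, 11, 10)]
    + [("young", "control", 10)]
    + [("old", "treated", 4)]
    + [("old", "control", y) for y in (3, 4, 5, 4)]
)
print(f"crude difference:        {crude_difference(data):.2f}")
print(f"age-adjusted difference: {stratified_difference(data):.2f}")
```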

Limits of observational studies

- Observational studies can reveal important patterns

- But without randomization:

- Confounding cannot be fully eliminated

- Strongest inference:

- Experiments > observational studies

- Best use:

- Identify relationships

- Generate hypotheses for experiments

Choosing a Sample Size

Choosing a sample size matters

- Goal: Choose enough samples to get useful results

- Too small:

- Cannot detect effects

- Very wide confidence intervals

- Too large:

- Wastes time, money, and resources

- May raise ethical concerns

- Key question: How many replicates per treatment?

Two ways to plan sample size

- Plan for precision

- Want a narrow confidence interval

- Plan for power

- Want a high probability of detecting a real effect

- Focus here:

- Comparing two means

Planning for precision

- Goal: Estimate the difference in means:

\[ \mu_1 - \mu_2 \]

- Use sample estimate:

\[ \bar{Y}_1 - \bar{Y}_2 \]

- Want a 95% confidence interval with small width

Margin of error drives sample size

- Confidence interval form:

\[ (\bar{Y}_1 - \bar{Y}_2) \pm \text{margin of error} \]

- Margin of error ≈

\[ 2 \times \operatorname{SE} \]

- Standard error (equal \(n\) per group, common standard deviation \(\sigma\)):

\[ \operatorname{SE} = \sqrt{\frac{2\sigma^2}{n}} \]

- Larger \(n\) → smaller SE → narrower interval
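These two pieces lead to the sample-size formula in one step. Assuming equal group sizes \(n\) and a common standard deviation \(\sigma\), set the margin of error equal to twice the SE and solve for \(n\):

\[ \text{margin of error} \approx 2\sqrt{\frac{2\sigma^2}{n}} \;\Longrightarrow\; (\text{margin of error})^2 \approx \frac{8\sigma^2}{n} \;\Longrightarrow\; n \approx \frac{8\sigma^2}{(\text{margin of error})^2} \]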

Sample size formula (approximate)

- Solve for sample size per group:

\[ n \approx \frac{8\sigma^2}{(\text{margin of error})^2} \]

- Interpretation:

- Larger variability (\(\sigma\)) → need larger \(n\)

- Higher precision (smaller margin) → need larger \(n\)
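The formula can be applied directly, rounding up to a whole replicate. This is a minimal Python sketch. The pilot estimate \(\sigma = 4\) and the target margins are hypothetical. Because \(n\) depends on the square of the margin, halving the margin of error quadruples the required sample size.

```python
import math


def n_for_margin(sigma, margin):
    """Approximate replicates per group for a 95% CI on mu1 - mu2 with the given margin.

    Uses n ≈ 8σ² / (margin of error)², rounded up to a whole replicate.
    """
    return math.ceil(8 * sigma**2 / margin**2)


# Hypothetical pilot estimate: sigma = 4 units.
print(n_for_margin(sigma=4, margin=2))  # difference estimated to within about ±2 units
print(n_for_margin(sigma=4, margin=1))  # halving the margin quadruples n
```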

Practical challenge

- \(\sigma\) is unknown

- Use:

- Pilot studies

- Previous research

- Educated guess

- Result:

- Sample size planning is approximate

Choosing sample size for power

- Goal: Choose \(n\) so you can detect a meaningful effect

- You must specify:

- Effect size (what difference matters biologically)

- Variability (\(\sigma\); from pilot data or past studies)

- Significance level (\(\alpha\), usually 0.05)

- Desired power (commonly 80%)

- 80% chance of rejecting a false null

- 20% chance of missing a real effect (Type II error)

- How you do it:

- Use software (e.g., R, online calculators)

- Input these values → solve for required \(n\)

- Key idea:

- Larger effect → smaller \(n\)

- Higher variability → larger \(n\)

- Higher power → larger \(n\)
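The inputs listed above can be combined in a common normal-approximation formula for a two-sided comparison of two means, \(n \approx 2(z_{1-\alpha/2} + z_{\text{power}})^2\sigma^2/\delta^2\) per group. This is a minimal Python sketch, and the planning values (\(\sigma = 10\), differences of 5 or 10) are hypothetical. t-based calculators, such as R's `power.t.test`, give a slightly larger \(n\), so the result is a rough lower bound.

```python
import math
from statistics import NormalDist


def n_for_power(delta, sigma, alpha=0.05, power=0.80):
    """Approximate replicates per group to detect a difference delta between two means.

    Normal approximation for a two-sided test:
        n ≈ 2 (z_{1-α/2} + z_{power})² σ² / δ²
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 * sigma**2 / delta**2)


# Hypothetical planning values: sigma = 10; biologically meaningful difference = 5 or 10.
for delta in (5, 10):
    print(f"delta = {delta}: n per group ≈ {n_for_power(delta, sigma=10)}")
print("90% power, delta = 10:", n_for_power(10, sigma=10, power=0.90))
```

The output follows the key ideas above: a smaller effect or higher power requires a larger \(n\).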

More data improves precision

- Small sample size:

- Very wide confidence intervals

- Increasing \(n\):

- Rapid improvement at first

- Large \(n\):

- Diminishing returns

Diminishing returns

- Precision improves quickly at first:

- e.g., \(n = 2 \rightarrow 5\)

- Then slows:

- e.g., \(n = 15 \rightarrow 20\)

- Each additional replicate adds less new information

- Tradeoff:

- Precision vs cost
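Diminishing returns follow from the \(1/\sqrt{n}\) term in the SE. This is a minimal Python sketch that uses the approximate margin of error \(2\sqrt{2\sigma^2/n}\) with a hypothetical \(\sigma = 1\). It compares going from \(n = 2\) to 5 with going from 15 to 20. The sketch uses the approximation 2 × SE instead of t quantiles. At very small \(n\), t quantiles would make the intervals even wider, so the early gains would be even larger.

```python
import math


def approx_margin(sigma, n):
    """Approximate 95% margin of error for mu1 - mu2: 2 * sqrt(2σ²/n), n per group."""
    return 2 * math.sqrt(2 * sigma**2 / n)


sigma = 1.0  # hypothetical; margins scale in proportion to sigma
for n_from, n_to in ((2, 5), (15, 20)):
    before, after = approx_margin(sigma, n_from), approx_margin(sigma, n_to)
    print(f"n {n_from:2d} -> {n_to:2d}: margin {before:.3f} -> {after:.3f} "
          f"(narrower by {before - after:.3f})")
```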

Summary of Considerations in Experimental Design

Summary: Designing studies and making inference

Experiments assign treatments and enable causal inference

Bias is reduced through controls, randomization, and blinding

Randomization balances confounding variables on average

Observational studies lack randomization and have weaker inference

Confounding in observational studies is reduced by matching and adjustment

Sampling error is reduced by replication, balance, and blocking

Extreme treatments increase the ability to detect effects

Factorial designs test multiple factors and their interactions

Sample size is planned for precision or power

Study design involves tradeoffs among precision, cost, and feasibility

BIOL 275 Biostatistics | Spring 2026